Your private AI workstation.

Exclusively for Mac.

Build visual workflows, search thousands of documents, and train your own agents. 100% local. 100% private.

Turn PDFs, notes, and contracts into your second brain. Our lightning-fast, native vector database (ObjectBox) searches your knowledge in milliseconds.

No Electron. No browser clutter. Built with Flutter and linked directly to Apple's Metal API, EIDOSDynamics makes efficient use of every core of your M-series chip.

Your data never leaves your Mac. No server uploads, no subscriptions, no cloud analytics. All API keys are encrypted directly on the nodes.

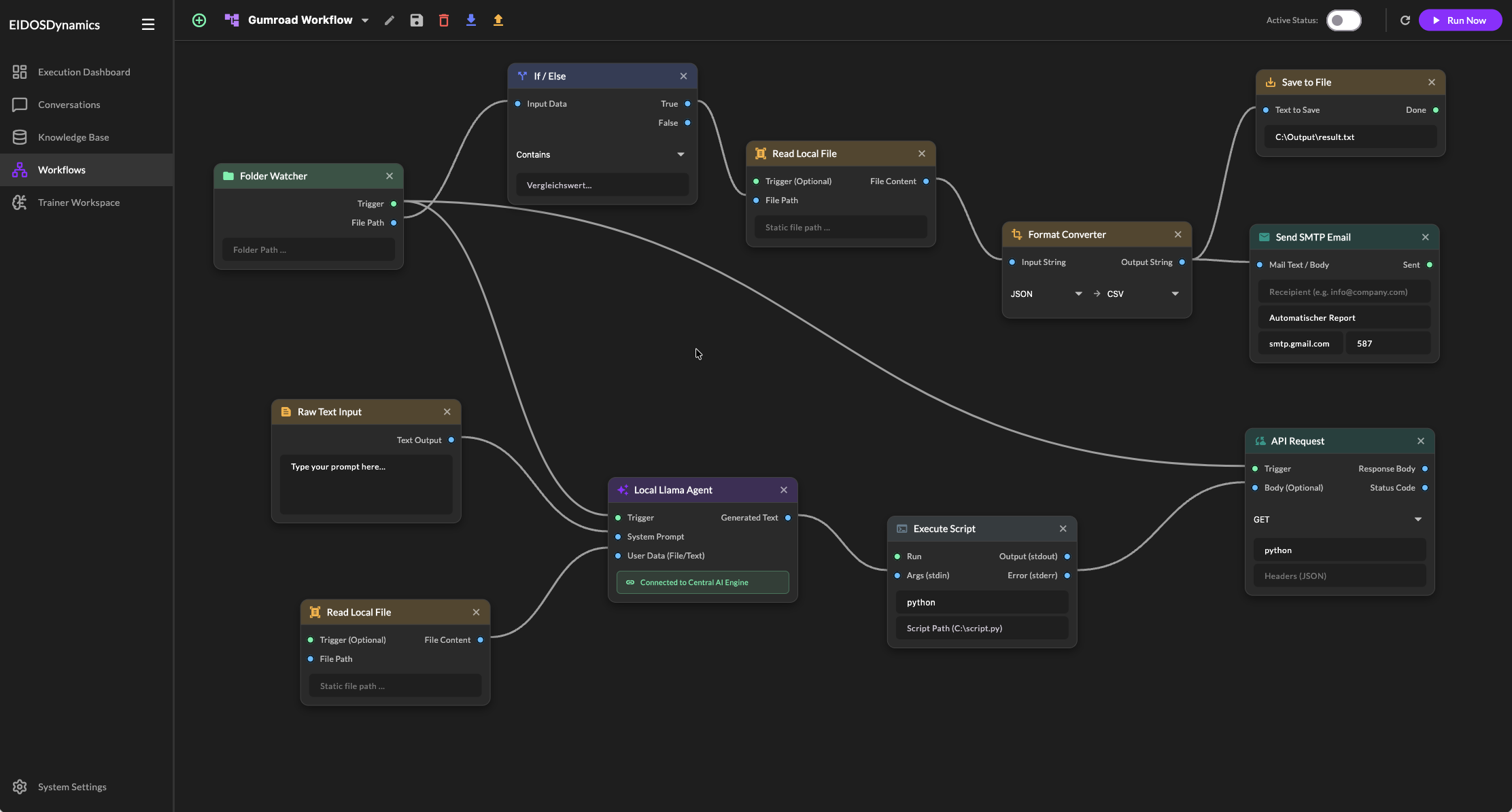

Programming is a thing of the past. Create complex AI processes entirely visually. Thanks to the native Spotlight menu, you'll practically fly across the canvas.

Test the Community Edition for as long as you want.

Upgrade when you need full performance.

Perfect for testing the local AI magic on your Mac.

Unlimited performance for power users, researchers, and creators.